NVIDIA’s Sonic AI: Revolutionizing Robotics and Teleoperation

NVIDIA's new Sonic AI redefines robotics with cutting-edge motion translation, teleoperation, and multimodal capabilities.

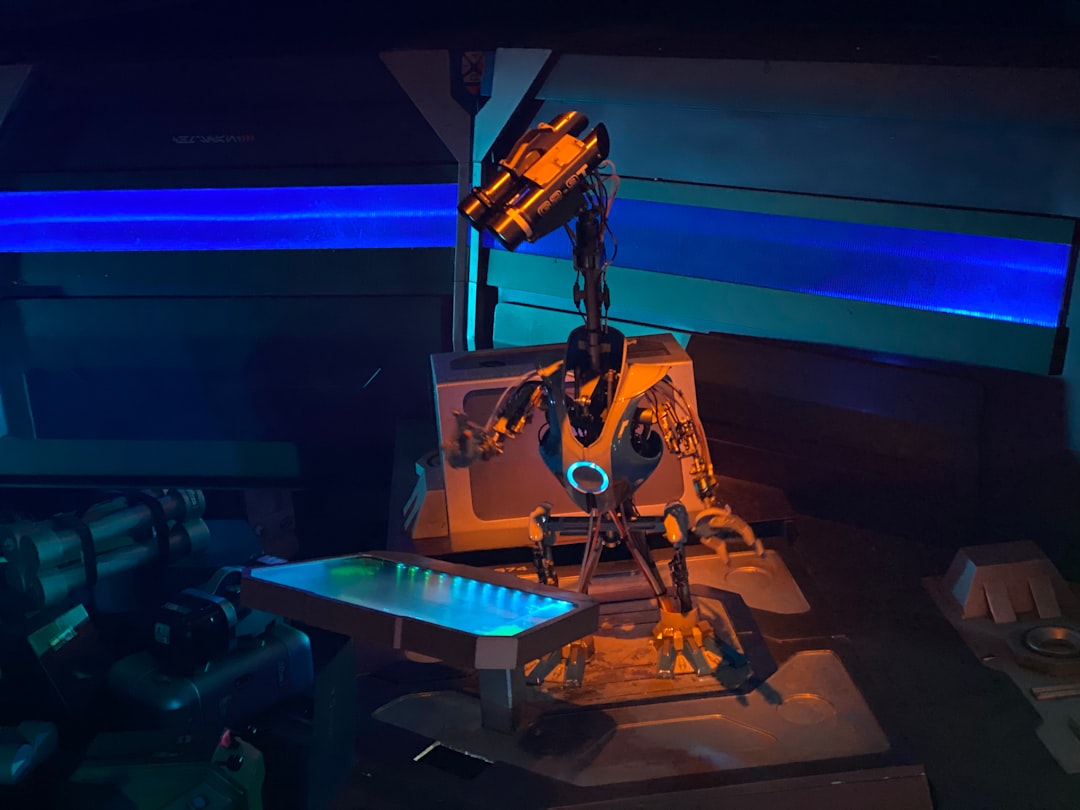

NVIDIA's latest innovation in AI-powered robotics, called Sonic, is poised to revolutionize the field. Spearheaded by researchers including Professor Zhu and Jim Fan, Sonic combines cutting-edge teleoperation with multimodal capabilities, allowing robots to perform tasks that were previously impossible or inefficient. From disaster rescue to extraterrestrial exploration, Sonic’s potential is both exciting and transformative.

What Makes Sonic Special?

At its core, Sonic is not about building new robots but about rethinking how they are controlled. The technology shifts the focus from hardware to software, specifically to AI models that translate human-like motion into robot joint positions in three-dimensional space. Remarkably, the system does not rely on action labels for training. Instead, it learns from 100 million frames of raw human motion data how to perform movements and transition between them naturally.

This means that whether you want a robot to crawl into tight spaces, mimic kung fu movements, or walk with an expressive gait, such as happily or stealthily, Sonic can handle it. Earlier robotics systems often needed extensive trial-and-error tuning just to walk reliably; Sonic performs this whole range of movements with an extraordinary level of precision and stability.

How Sonic Works

The Sonic platform is built on a streamlined neural network with 42 million parameters. That may sound like a lot, but it is lightweight by the standards of advanced AI models. This efficiency lets Sonic run on everyday devices such as smartphones and, jokingly, even on low-power hardware like a ‘toaster.’
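To put 42 million parameters in perspective, the short sketch below estimates how much memory the weights alone occupy at a few common numeric precisions. The precisions are assumptions for illustration only; the article does not say which format Sonic uses, and real memory use also includes activations and runtime overhead.

```python
# Back-of-the-envelope size of a 42M-parameter model's weights.
# The precisions listed are illustrative assumptions, not Sonic's actual format.
PARAMS = 42_000_000
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_megabytes(params: int, dtype: str) -> float:
    """Return the size of the weights in megabytes (1 MB = 10**6 bytes)."""
    return params * BYTES_PER_PARAM[dtype] / 1e6


if __name__ == "__main__":
    for dtype in BYTES_PER_PARAM:
        print(f"{dtype}: {weight_megabytes(PARAMS, dtype):.0f} MB")
```

Run as a script, this prints 168 MB for 32-bit floats, 84 MB for 16-bit floats, and 42 MB for 8-bit integers: small next to the gigabytes of memory in a modern smartphone.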

Here’s a breakdown of the system’s process:

- Input Modes: Sonic supports a variety of inputs, including human motion videos, voice commands, music, or written text.

- Motion Generation: Inputs are converted into human-like motion patterns by a motion generator.

- Latent Space Encoding: A human encoder compresses the motion into a compact latent representation.

- Universal Tokens: The latent representation is then quantized into universal tokens, a shared representation that lets different kinds of input be turned into the same kind of motor commands.

- Motor Commands: A decoder converts the universal tokens into motor commands that drive the robot's joints.
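The encode, quantize, decode steps above can be sketched as a toy pipeline (requires Python 3.8+ for `math.dist`). Every function here is a hypothetical stand-in rather than Sonic's code: `encode_motion` just averages pairs of joint values, `decode_token` just scales a code vector, and `quantize` uses plain nearest-neighbor vector quantization, which is one common way to turn continuous latents into discrete tokens but not necessarily the scheme Sonic uses.

```python
import math
from typing import List, Sequence, Tuple

Vector = List[float]


def encode_motion(frame: Sequence[float]) -> Vector:
    """Hypothetical human encoder: compress a motion frame by averaging
    adjacent joint values (a real encoder would be a learned network)."""
    return [(frame[i] + frame[i + 1]) / 2.0 for i in range(0, len(frame) - 1, 2)]


def quantize(latent: Sequence[float], codebook: Sequence[Sequence[float]]) -> int:
    """Nearest-neighbor vector quantization: return the index of the closest
    codebook vector. That index plays the role of a discrete token."""
    return min(range(len(codebook)), key=lambda k: math.dist(latent, codebook[k]))


def decode_token(token: int, codebook: Sequence[Sequence[float]], gain: float = 1.0) -> Vector:
    """Hypothetical motor decoder: map a token back to joint targets by
    looking up its code vector and scaling it by `gain`."""
    return [gain * value for value in codebook[token]]


def motion_to_commands(frames: Sequence[Sequence[float]],
                       codebook: Sequence[Sequence[float]]) -> Tuple[List[int], List[Vector]]:
    """Run each frame through encode -> quantize -> decode."""
    tokens = [quantize(encode_motion(frame), codebook) for frame in frames]
    commands = [decode_token(token, codebook) for token in tokens]
    return tokens, commands


if __name__ == "__main__":
    codebook = [[0.0, 0.0], [1.0, 1.0], [-1.0, 0.5]]
    frames = [[0.9, 1.1, 1.0, 0.8], [0.1, -0.1, 0.0, 0.2]]
    print(motion_to_commands(frames, codebook))
```

With this toy codebook, the two frames map to tokens `[1, 0]` and commands `[[1.0, 1.0], [0.0, 0.0]]`. Because the decoder only ever sees token indices, any input that can be encoded into the same codebook can drive it, which is the intuition behind calling the tokens "universal."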

Addressing the Challenges

While Sonic’s capabilities are groundbreaking, translating human-like motion to robots has its hurdles. Robots do not move like humans: their bodies differ in structure, speed, and flexibility. For example, if a user commands a robot to turn 180 degrees, executing the turn too quickly could destabilize the robot or even damage its hardware.

To address this, the developers implemented what they call a "root trajectory spring model." It uses a mathematical function with an exponential term that damps sudden or overly aggressive movements. Over time this term decays smoothly, which prevents oscillation and lets the robot reach its target position safely. Tuning the damping is tricky, however: too much and the robot becomes sluggish, too little and it risks harming itself.
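The article does not give the exact formula, so the sketch below assumes a textbook critically damped spring, a common choice for this kind of smoothing. With initial error d = x0 - target and initial velocity v0, the position follows x(t) = target + (d + (v0 + ω·d)·t)·exp(-ω·t). The stiffness parameter `omega` is a hypothetical name; it sets how aggressively the root converges, mirroring the sluggish-versus-aggressive trade-off described above.

```python
import math
from typing import List, Tuple


def critically_damped(x0: float, v0: float, target: float,
                      omega: float, t: float) -> Tuple[float, float]:
    """Closed-form position and velocity of a critically damped spring at time t.

    x(t) = target + (d + (v0 + omega*d) * t) * exp(-omega * t), with d = x0 - target.
    Starting from rest, the error shrinks monotonically with no overshoot.
    """
    d = x0 - target
    c = v0 + omega * d
    decay = math.exp(-omega * t)
    x = target + (d + c * t) * decay
    v = (v0 - omega * c * t) * decay
    return x, v


def yaw_trajectory(target: float, omega: float, duration: float, dt: float) -> List[float]:
    """Sample the heading (radians) from rest at 0 toward `target` every `dt` seconds."""
    steps = int(round(duration / dt))
    return [critically_damped(0.0, 0.0, target, omega, k * dt)[0] for k in range(steps + 1)]


if __name__ == "__main__":
    # A 180-degree (pi radian) turn, sampled at 100 Hz for 3 seconds.
    traj = yaw_trajectory(math.pi, omega=4.0, duration=3.0, dt=0.01)
    print(f"start={traj[0]:.3f} rad, end={traj[-1]:.4f} rad")
```

For this 180-degree turn with `omega = 4.0`, the heading rises monotonically toward π without overshooting, and the peak turning rate is ω·π/e ≈ 4.6 rad/s (e being Euler's number). Doubling `omega` halves the settling time but also doubles that peak rate, which is the balance the developers have to strike.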

A Multimodal System with Real-World Applications

One of the most exciting aspects of Sonic is its multimodality. The ability to accept input from diverse sources, such as music or text commands, significantly expands its range of applications. For instance, a robot could be teleoperated into spaces that are dangerous or inaccessible to humans, making it well suited to rescue missions searching for survivors trapped under rubble. Future developments may enable Sonic-powered robots to work in outer space, reducing the risk to human astronauts.

Robots powered by Sonic can also carry out simple commands on their own. Want a robot to mow your lawn? No problem. Tell Sonic, and the robot can get the job done without direct teleoperation. Sonic is also unusually expressive: it can convey emotions or physical conditions (such as walking as if injured), which could be valuable for simulations and other specialized uses.

Compact Yet Powerful

Training Sonic required substantial computational resources, 128 GPUs running for three full days, yet the finished model is remarkably compact. Once trained, it runs comfortably on low-power devices. This split between a heavy one-time training cost and light running costs is what makes Sonic’s capabilities accessible to a wider audience.
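As a rough worked figure, the training budget quoted above converts into GPU-hours as follows. This counts wall-clock GPU time only; the article does not say which GPU model was used.

```latex
128\ \text{GPUs} \times 3\ \text{days} \times 24\ \tfrac{\text{h}}{\text{day}} = 9{,}216\ \text{GPU-hours}
```

That cost is paid once during training; running the trained 42-million-parameter model afterwards needs only a single low-power device.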

Moreover, NVIDIA has committed to making all its showcased models free and open-source. This commitment to open research democratizes access to advanced robotics, allowing researchers, hobbyists, and developers to explore and innovate further.

The People Behind Sonic

The Sonic project owes much of its success to the leadership of Professor Zhu and Jim Fan. In just two years, Fan has established a humanoid robotics lab at NVIDIA that has delivered one breakthrough after another. This rapid pace of innovation underscores the transformative potential of their research.

Looking Ahead

Sonic represents an early step in what could soon become a ubiquitous branch of robotics and AI. While the project remains in its infancy, the implications are significant. As the technology matures, future iterations might extend further into practical tasks such as folding laundry, preparing meals, or highly specialized work.

More broadly, Sonic underscores the value of building “good thinking” into machines. By compressing vast, messy datasets into abstract tokens, the AI achieves a distilled understanding of human motion. This principle could guide developments in other fields, from natural language processing to autonomous vehicles. Perhaps just as importantly, it serves as a reminder to seek simplicity and clarity in both technical and human challenges.

NVIDIA’s Sonic AI has opened up exciting possibilities for robotics. It's not an endpoint but a beginning, setting the stage for what’s next in a rapidly evolving field. With its blend of innovation, accessibility, and practicality, Sonic has the potential to deliver on the long-held promise of robots that seamlessly integrate into human life.

Staff Writer

Chris covers artificial intelligence, machine learning, and software development trends.
