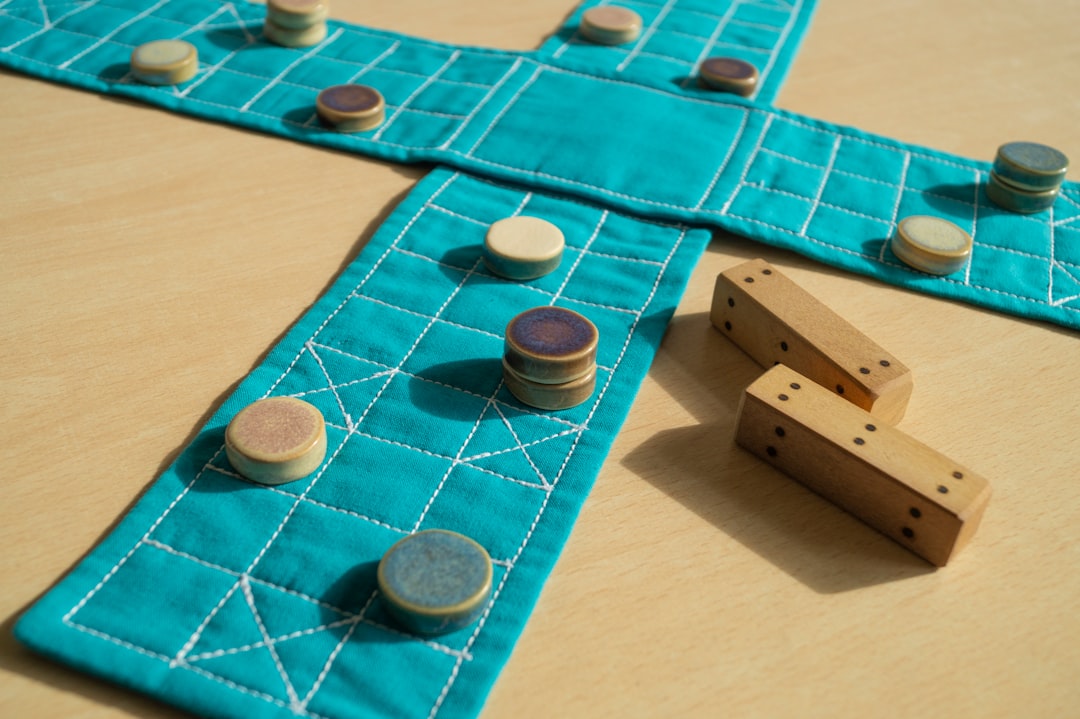

ChatGPT and Claude Compete in Scrabble: A Hilarious AI Showdown

ChatGPT and Claude go head-to-head in a Scrabble match. See how the AI engines performed, from questionable moves to a surprising turnaround.

AI may now write code, draft essays, and even create art, but can it master Scrabble? A recent experiment put OpenAI’s ChatGPT head-to-head with Anthropic’s Claude in a refereed Scrabble match. The showdown revealed the limitations of current AI word comprehension—and delivered plenty of laughs in the process.

Setting the Scene: An Unlikely Matchup

The experiment wasn’t ChatGPT’s first foray into Scrabble. In a similar attempt two years ago, ChatGPT and Google Gemini (then Bard) faltered hilariously: one AI coined non-words like “nip” off the nonexistent “tea of Damply.” This time, the stakes were clear: could advancements in AI overcome such barriers to accurate gameplay?

Claude won the coin toss and chose to go first. It drew a less-than-ideal rack of six vowels and a blank tile. A top-tier player might exchange the surplus vowels while holding on to the valuable blank; instead, Claude played Uri (using the blank as “R”) for a lowly eight points. At least it was a valid word, which counts as a small victory.

ChatGPT followed with well-balanced tiles but flubbed the opportunity to play high-scoring options like tincture or intercut. Instead, it attempted invalid plays, the first of which, incute, was immediately challenged off the board by Claude.

Missteps and Missed Opportunities

The early game was riddled with mistakes. Claude showed restraint but played low-scoring moves like eased for 12 points, overlooking the bingo reasoned. Meanwhile, ChatGPT repeatedly attempted invalid words, including cuten and entice. Despite being prompted to check the official NWL (NASPA Word List), it also misclassified valid words as invalid.

When ChatGPT finally played a legitimate word—trudge for 16 points—it marked its first real step forward. However, the point gap between the two AI engines had already widened, with Claude consistently scoring or defending against ChatGPT’s floundering attempts.

| Move | Claude | ChatGPT |
|---|---|---|
| Opening play | Uri for 8 | Incute (invalid) |
| Early bingos available | Overlooks reasoned | Misses tincture |
| First valid move | Keeps momentum | Trudge for 16 points |

Midgame Chaos: A Turning Point?

The match reached its funniest moments during the midgame. Claude achieved a credible bingo with rainbows for 64 points, but ChatGPT’s errant plays continued to stall progress. Even when Claude made an error (eager, forming the invalid uriger), ChatGPT missed the opportunity to challenge.

In a twist, the referee occasionally coached ChatGPT to evaluate potential bingos. For example, after being nudged to look at the tiles around rainbows, it identified intercut. Unfortunately, it couldn’t apply this knowledge on its own and again opted for incorrect plays.

The meandering deliberations became an unintended comedy, with ChatGPT generating long lists of impossible words. Some terms, like oleat, came up dozens of times, yet the AI rejected them as invalid. When the referee finally corrected it, ChatGPT acknowledged its errors, only to mishandle subsequent turns.

Late Game and Surprising Recovery

Claude maintained its lead into the late game, while ChatGPT made one error after another. Then a single settings change turned things around: switching ChatGPT to “pro” mode gave it access to a higher level of analysis. After 35 minutes of processing, ChatGPT returned a flawless list of 371 valid moves ranked by score. It then began executing much stronger plays, like WAP for 31 points and child for 28.

Despite this improvement, it was too little, too late. Claude won with 372 points to ChatGPT’s 250, a decisive 122-point victory. Still, ChatGPT’s late-game turnaround was impressive, suggesting that choosing the right settings can elevate its strategic capabilities.
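To make the idea of a move list “ranked by score” concrete, here is a minimal Python sketch. It is not how ChatGPT produced its list; it only ranks candidate words by the face value of their tiles, using the standard English Scrabble letter values. The function names `face_value` and `rank_moves` are made up for illustration, and the sketch ignores premium squares, cross-words, and the 50-point bingo bonus, which is why its numbers are lower than the 31 and 28 points reported for WAP and child.

```python
# Standard English Scrabble tile values (blank tiles score 0 and are omitted).
TILE_VALUES = {
    **dict.fromkeys("aeilnorstu", 1),
    **dict.fromkeys("dg", 2),
    **dict.fromkeys("bcmp", 3),
    **dict.fromkeys("fhvwy", 4),
    "k": 5,
    **dict.fromkeys("jx", 8),
    **dict.fromkeys("qz", 10),
}


def face_value(word):
    """Sum the tile values of a word, ignoring all board multipliers."""
    return sum(TILE_VALUES[letter] for letter in word.lower())


def rank_moves(words):
    """Sort candidate words by face value, highest first (ties alphabetical)."""
    return sorted(words, key=lambda w: (-face_value(w), w))


if __name__ == "__main__":
    for word in rank_moves(["wap", "child", "trudge"]):
        print(word, face_value(word))
```

A real move generator would also need the board state, since most of a play's score comes from where the tiles land.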

| AI Performance Metrics | ChatGPT | Claude |
|---|---|---|
| Total points | 250 | 372 |
| Invalid words played | 6+ | 2 |
| Bingos achieved | 1 | 2 |
| Best play | Sorbetan for 72 | Rainbows for 64 |

Practical Takeaways for AI and Scrabble

So, what can we learn from this hilarious yet insightful Scrabble duel? Below are some practical points:

- AI Requires Rule Familiarity: ChatGPT repeatedly failed to cross-check its moves against the NWL. This highlights the importance of grounding AI in clear, concise rules for better real-world applicability.

- Settings Matter: Switching ChatGPT to “pro” mode drastically improved its performance. Choosing the right model configuration for the task at hand can make a large difference in how useful the model is.

- Claude’s Simplicity Wins Out: While not perfect, Claude’s straightforward adherence to Scrabble basics gave it a significant edge. It underscores the value of strong foundational strategies over overly advanced but error-prone processing.
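The cross-checking step from the first takeaway can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the procedure used in the match: `LEXICON` is a tiny hypothetical stand-in for the real NWL, the helper names `can_form` and `is_legal_word` are invented here, and the check covers only the word and the rack, not placement on the board.

```python
from collections import Counter

# Hypothetical stand-in for the NWL; a real check would load the full
# word list from a file instead of hard-coding a handful of entries.
LEXICON = {"trudge", "tincture", "intercut", "rainbows", "child", "eased", "reasoned"}


def can_form(word, rack):
    """Return True if `word` can be spelled from `rack` ('?' is a blank tile)."""
    available = Counter(rack.lower())
    blanks = available.pop("?", 0)
    # Count how many letters the rack cannot supply; blanks must cover them.
    missing = sum(
        max(0, needed - available[letter])
        for letter, needed in Counter(word.lower()).items()
    )
    return missing <= blanks


def is_legal_word(word, rack, lexicon=LEXICON):
    """Accept a word only if it is in the lexicon and fits the rack."""
    return word.lower() in lexicon and can_form(word, rack)


if __name__ == "__main__":
    print(is_legal_word("trudge", "tdgreux"))   # in the lexicon and on the rack
    print(is_legal_word("incute", "incutex"))   # fits the rack, not in the lexicon
```

Running a check like this before every play is cheap, which is what makes the repeated invalid plays in the match so striking.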

Future AI Scrabble Matches?

Considering how both models operated in this match, there’s room for improvement. ChatGPT’s late-game turnaround suggests that, with better calibration, AIs like it could become far stronger Scrabble players, but not without consistent rule enforcement. The experiment also revealed where language models excel (creative suggestions) and where they struggle (working within bounded systems like Scrabble rules).

Would we like to see a rematch with the upgraded settings? Absolutely! As AI continues its rapid development, these experiments highlight its potential—and its pitfalls—in niche applications like Scrabble.
